Quiz report enhancements

Moodle 2.0

This document describes a package of work relating to the quiz reports that we (The Open University) hope to outsource. Please discuss this proposal in this forum thread.

The work falls into four areas: improvements to the Item Analysis report; improvements to the Overview report; improvements to the Regrade report; and general improvements and bug fixing. Some of the general improvements may be necessary to enable the other three tasks to be done. The remainder are things that it would be nice to have fixed while this code is being worked on, to improve long-term maintainability, provided that does not add too much to the development cost.

The Open University uses the four standard quiz reports, and the third-party Detailed Responses report. (If, as a result of this work, the Detailed responses report becomes reliable enough, we may move it back into core Moodle. In fact, I think this is quite likely.)

All the work done must conform to our general requirements for outsourced work. We would like all this work contributed directly into Moodle 2.0, as well as provided to us as a patch we can apply against our own Moodle, which is currently Moodle 1.9 with customisations.

Improvements to the Item Analysis report

I propose that we break up the current item analysis report into two separate bits, an overview for the whole quiz, and then drill-down into the details for each question. I propose we call these the "Test Statistics report" and the "Individual Item Analysis report". Better suggestions are welcome.

Calculation details

I will describe the details of the calculations of the various statistics on a separate, maths-heavy page, Quiz_item_analysis_calculations. Then this page will just refer to those statistics and say which is displayed where. The statistics fall into three categories:

- statistics that are properties of the whole test;

- statistics that are properties of a particular position in the test;

- statistics that are properties of a particular test item.

The last two are different because of random questions, which mean that different students may have received different questions in the position "question 1".

The implementation of these calculations should be a prime candidate for unit testing. I think that, in the code, we should try to separate:

- Extracting the necessary data from the database

- Doing the calculations

- Displaying the results to users

However, we need to do it in a way that does not just load all the data into memory at once and crash the server!
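As a rough illustration of that separation, here is a minimal sketch in Python (chosen for brevity; the real code would be Moodle PHP). The function names, the `AttemptScore` record, and the choice of mean and sample standard deviation as stand-in statistics are all assumptions made for the example; the actual statistics are the ones defined on the calculations page. The calculation layer consumes rows one at a time, so memory use does not grow with the number of attempts.

```python
import math
from typing import Iterable, Iterator, NamedTuple


class AttemptScore(NamedTuple):
    """Hypothetical record type: one row per quiz attempt."""
    attempt_id: int
    score: float


def extract_scores(rows: Iterable[tuple]) -> Iterator[AttemptScore]:
    """Extraction layer: yield one record at a time instead of building a list."""
    for attempt_id, score in rows:
        yield AttemptScore(attempt_id, float(score))


def summarise(scores: Iterable[AttemptScore]) -> dict:
    """Calculation layer: single pass, constant memory (Welford's algorithm)."""
    n, mean, m2 = 0, 0.0, 0.0
    for record in scores:
        n += 1
        delta = record.score - mean
        mean += delta / n
        m2 += delta * (record.score - mean)
    # Sample standard deviation; whether the report wants sample or
    # population SD is an assumption here.
    sd = math.sqrt(m2 / (n - 1)) if n > 1 else 0.0
    return {"attempts": n, "mean": mean, "sd": sd}


def render(stats: dict) -> str:
    """Display layer: formats results only, no data access or calculation."""
    return "Attempts: {attempts}, mean: {mean:.2f}, SD: {sd:.2f}".format(**stats)
```

Because `summarise` takes any iterable, it can be unit tested with a small hand-written list, while production code passes it a database cursor.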

Although the specification below says that all statistics should be displayed, wherever it can be done conveniently, the code should be structured so that if a particular Moodle installation does not like a particular statistic, it is easy to see which bits of code to comment out to hide that statistic. (It is probably overkill to make configuration options for this.)

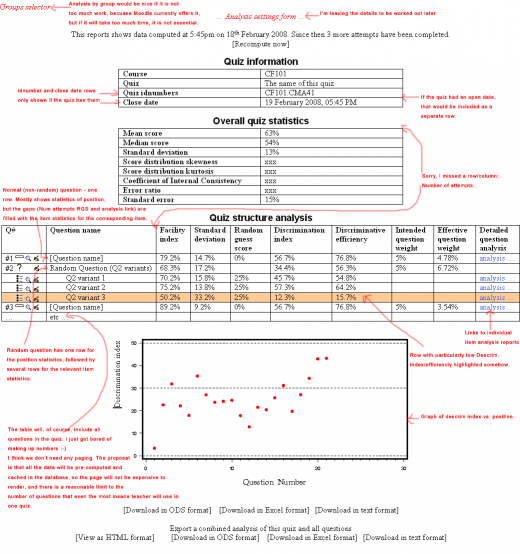

Quiz Statistics report

The mock-up on the right may help clarify our intentions.

This report has two sections. The first section is "Overall quiz statistics" which contains all the statistics that are properties of the test as a whole. For convenience, the course shortname, quiz open and close dates, and quiz name and idnumber (if set) should be repeated with these overall statistics.

The second section is "Quiz structure", containing a table. The table will have one column for each position statistic and each item statistic.

Where a position in the test is filled with a non-random question, there is a single row in the table with all the statistics.

Where a position is filled with a random question, there will be one row in the table giving the position statistics, followed by a number of rows giving the item statistics for each question that a student received in this position. (This means that, if there are two random questions picking from the same category or categories, there may be repeated rows in the table.)
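The row layout described above can be sketched as follows, in Python for brevity. The input tuple shape, the `build_structure_rows` name, and the use of a simple count and mean as stand-ins for the real position and item statistics are assumptions made for this example only.

```python
from collections import defaultdict


def build_structure_rows(responses):
    """Build the 'Quiz structure' rows.

    responses: iterable of (position, question_id, is_random, score) tuples,
    one per student response (a hypothetical input shape).
    """
    by_position = defaultdict(list)
    by_item = defaultdict(lambda: defaultdict(list))
    random_positions = set()
    for position, question_id, is_random, score in responses:
        by_position[position].append(score)
        by_item[position][question_id].append(score)
        if is_random:
            random_positions.add(position)

    def summary(scores):
        return {"count": len(scores), "mean": sum(scores) / len(scores)}

    rows = []
    for position in sorted(by_position):
        if position in random_positions:
            # One row of position statistics for the random slot...
            rows.append({"position": position, "question": "Random",
                         **summary(by_position[position])})
            # ...followed by one row of item statistics per question drawn.
            for question_id in sorted(by_item[position]):
                rows.append({"position": None, "question": question_id,
                             **summary(by_item[position][question_id])})
        else:
            # Non-random: position and item coincide, so a single row suffices.
            question_id = next(iter(by_item[position]))
            rows.append({"position": position, "question": question_id,
                         **summary(by_position[position])})
    return rows
```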

Positions or items that appear to be performing particularly badly (Discrimination coefficient below XXX? perhaps other conditions too?) should be highlighted. We don't yet have a clear picture what constitutes 'particularly badly', so structure the code so that it is clear which part you need to tweak to change this. For example, have a function is_dubious_question($row_of_data).
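A minimal sketch of such a function, in Python rather than Moodle PHP. The threshold value and the `discrimination` key are placeholders invented for the example, since the real cut-off (the "XXX" above) has not been decided; the point is only that the rule lives in one clearly named place.

```python
# Placeholder only: the specification leaves the real threshold undecided.
# Zero is used here purely so that the example runs.
DISCRIMINATION_THRESHOLD = 0.0


def is_dubious_question(row_of_data: dict) -> bool:
    """Return True if this row should be highlighted in the report.

    row_of_data is assumed to be a dict of statistics for one position or
    item, with the discrimination coefficient under the hypothetical key
    'discrimination'. Further conditions would be added here.
    """
    discrimination = row_of_data.get("discrimination")
    if discrimination is None:
        # Not enough data to judge, so do not highlight.
        return False
    return discrimination < DISCRIMINATION_THRESHOLD
```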

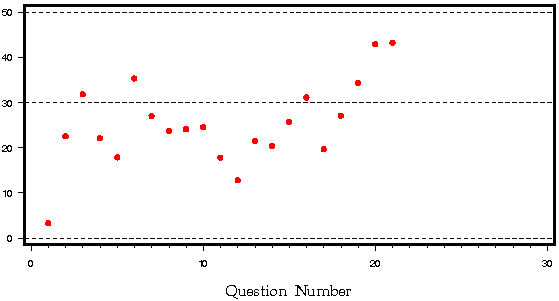

Our "Early warning test" generates a graph like this showing the discrimination coefficient of each question.

That is, the x-axis is test position, and the y-axis is Discrimination Index. We want a graph like this at the bottom of the report.

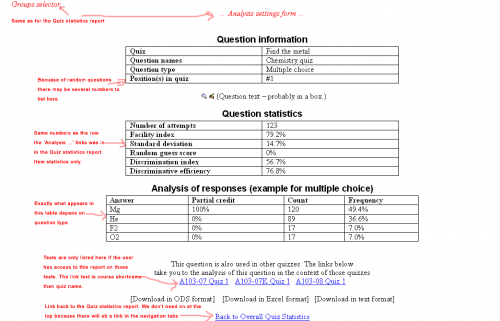

Individual Item Analysis report

The mock-up on the right may help clarify our intentions.

Each question in the overview analysis report should be a link to a more detailed analysis of that question.

At the top of the page, all of the item statistics relating to this question should be shown. (This information is repeated from the Test Statistics report.)

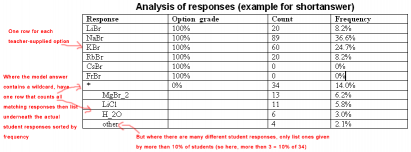

Then there is a section analysing all of the student responses, with frequency counts, like the middle columns of the existing Item Analysis report. However, we want this to be more sophisticated for shortanswer and numerical questions that use wildcards. Hopefully the illustration on the right makes this clear.
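One possible reading of the wildcard requirement is to group the actual student responses under the answer pattern they matched, so that a teacher can see what a pattern such as `*Paris*` really caught. The sketch below, in Python for brevity, assumes `*` is the only wildcard, that matching is case-insensitive, and that the first matching pattern wins; the function names and the "[no match]" label are hypothetical.

```python
import re
from collections import Counter


def pattern_to_regex(pattern):
    """Turn an answer pattern where '*' matches any text into a regex."""
    parts = [re.escape(part) for part in pattern.split("*")]
    return re.compile("^" + ".*".join(parts) + "$", re.IGNORECASE | re.DOTALL)


def analyse_responses(answer_patterns, responses):
    """Return {pattern: Counter of the actual responses it matched}.

    Patterns are tried in order and the first match wins (an assumption).
    Responses matching no pattern are counted under '[no match]'.
    """
    compiled = [(pattern, pattern_to_regex(pattern)) for pattern in answer_patterns]
    analysis = {pattern: Counter() for pattern in answer_patterns}
    analysis["[no match]"] = Counter()
    for response in responses:
        text = response.strip()
        for pattern, regex in compiled:
            if regex.match(text):
                analysis[pattern][text] += 1
                break
        else:
            analysis["[no match]"][text] += 1
    return analysis
```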

Beneath the details for a particular question, if the question is used in other quizzes (that the current user has reports access to), then we should provide cross-links to the detailed analysis of this question in those quizzes too. This effectively solves MDL-8974. (We may want to consider adding these links somewhere in the question bank interface too, perhaps on the question preview page?)

There should be a link back to the Quiz Statistics report.

(Note: I have a Word document that has examples of how we want this to look for various question types, but I don't think it adds much, so I have not copied it here. Also, there is an implementation decision to make about how much control we give individual question types about how their responses are analysed.)

(An interesting idea would be to draw a scatter plot of question score against test score at the bottom of this report. Discrimination index/efficiency is meant to be a measure of how good that correlation is, and a scatter plot would illustrate that nicely. However, it is probably beyond the scope of this work.)

Performance considerations

This report has to perform a lot of expensive calculations. Instead of re-computing this every time the report is viewed, we probably need to cache the computed values in a separate database table. This would rely on one of the general improvements described below.

At the top of both the Test Statistics and Individual Item Analysis reports, there should be a message, "This report shows data computed at date and time. Since then XX more attempts have been completed.", with a 'Recompute now' button.

The data will have to be cached separately for each possible combination of options.

When the recompute button is pressed, go to a progress bar for the duration of the calculation, then redirect back to the report.
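The caching behaviour described in this section might look roughly like the following sketch (Python for brevity; in Moodle the cache would be a database table rather than an in-memory dict). The class name, the key derivation, and the option names used in the usage example are all assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone


def options_key(quiz_id, options):
    """Derive one cache key per quiz and per combination of report options."""
    canonical = json.dumps(options, sort_keys=True)
    return hashlib.sha1(f"{quiz_id}:{canonical}".encode("utf-8")).hexdigest()


class StatisticsCache:
    """In-memory stand-in for the cache table."""

    def __init__(self):
        self._rows = {}

    def get(self, quiz_id, options):
        return self._rows.get(options_key(quiz_id, options))

    def store(self, quiz_id, options, stats, attempt_count, now=None):
        """Called when 'Recompute now' finishes."""
        self._rows[options_key(quiz_id, options)] = {
            "stats": stats,
            "attempt_count": attempt_count,
            "computed_at": now or datetime.now(timezone.utc),
        }

    def staleness_message(self, quiz_id, options, current_attempt_count):
        """Build the message shown at the top of the report, or None if uncached."""
        entry = self.get(quiz_id, options)
        if entry is None:
            return None
        newer = current_attempt_count - entry["attempt_count"]
        return ("This report shows data computed at {:%Y-%m-%d %H:%M}. "
                "Since then {} more attempts have been completed."
                ).format(entry["computed_at"], newer)
```

Sorting the option keys before hashing means the same set of options always maps to the same cache row, regardless of the order in which the form supplied them.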

Other minor improvements

Implement this suggestion: MDL-8464 In the item analysis report, give teachers an icon to edit the question. Do this in both the Test Statistics and Individual Item Analysis reports.

Export options

It should be possible to export each of the Test Statistics and Individual Item Analysis reports in XLS, ODS or CSV format.

In addition, there should be an option to export (as XLS, ODS, CSV or HTML) a single file that contains first the Test Statistics report, followed by the Individual Item Analysis report for each item in the test. This is so that the data can conveniently be taken away and analysed off-line. The HTML version of this combined report should look reasonably good when printed.

Improvements to the Overview report

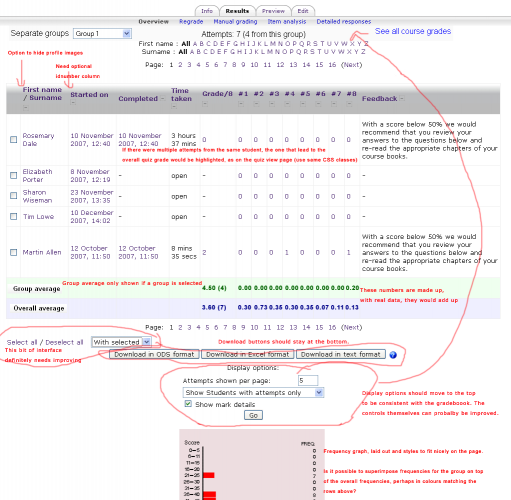

The mock-up on the right may help clarify our intentions.

Add group and course averages

As in the gradebook reports, we should add a row showing the average over all students in the course (even if the current user is only allowed to see one group). And for a quiz in group mode, add another row above that giving group averages. These averages should match the ones in the gradebook; that is, they should be averages of the students' final scores, not the individual attempt scores.
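To make the distinction concrete, here is a small Python sketch of the two averages, computed from one final score per student rather than from individual attempts. The function names and the input shapes (a dict of final grades and a dict of group membership) are assumptions for the example.

```python
def average(values):
    """Mean of an iterable, or None when there is nothing to average."""
    values = list(values)
    return sum(values) / len(values) if values else None


def overview_averages(final_grades, group_members, group_id=None):
    """Compute the extra rows for the overview table.

    final_grades: {user_id: final quiz grade}, one entry per student.
    group_members: {group_id: set of user_ids}.
    The course average always covers every student, even when the viewer
    is restricted to a single group.
    """
    result = {"course": average(final_grades.values())}
    if group_id is not None:
        members = group_members.get(group_id, set())
        result["group"] = average(
            grade for user, grade in final_grades.items() if user in members
        )
    return result
```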

Make it clear which student attempt gives the final grade

As on the quiz view page, where a student has made multiple attempts, highlight the one that gives the final score, if the scoring method is first, last or highest score. Perhaps it would be useful to add an option to only show/export the 'final score' attempts.

Add the same highlighting/option to the detailed responses report.
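The rule for choosing which attempt to highlight can be sketched as below, in Python for brevity. The method names are plain strings invented for the example, and breaking ties on highest score in favour of the earliest attempt is an assumption.

```python
def final_attempt_index(attempt_scores, grading_method):
    """Return the index of the attempt that determines the final score.

    attempt_scores: scores in chronological order.
    grading_method: 'first', 'last', 'highest' or 'average' (hypothetical
    names). Returns None when no single attempt gives the final score.
    """
    if not attempt_scores:
        return None
    if grading_method == "first":
        return 0
    if grading_method == "last":
        return len(attempt_scores) - 1
    if grading_method == "highest":
        # max() returns the first maximal index, so ties go to the
        # earliest attempt (an assumption, not a stated requirement).
        return max(range(len(attempt_scores)), key=lambda i: attempt_scores[i])
    return None  # e.g. average grade: nothing to highlight
```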

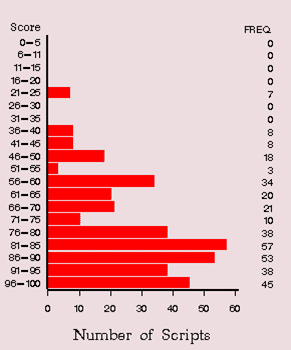

Add a summary graph

Our "Early warning test" (see above) generates a graph like this showing score frequencies for all students:

It should be possible to produce a graph like this using graphlib.php and a single database query, so I think we should do so, and display it after the results table. It should count the number of attempts with a score in each of an appropriate number of score brackets, depending on the quiz total score.
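The bracket counting itself is simple; a Python sketch follows. The function name and the default of ten bands are assumptions, and how to pick an "appropriate" number of bands for a given total score is left open here. In the real report the same grouping could be pushed into the single database query.

```python
def score_bands(scores, max_score, band_count=10):
    """Count attempts per score band.

    Returns a list of (lower, upper, count) tuples covering 0..max_score.
    A score equal to max_score is counted in the top band.
    """
    width = max_score / band_count
    counts = [0] * band_count
    for score in scores:
        index = int(score // width)
        # Clamp so that max_score (and any out-of-range value) stays in range.
        index = max(0, min(index, band_count - 1))
        counts[index] += 1
    return [(i * width, (i + 1) * width, counts[i]) for i in range(band_count)]
```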

Add a column for the user's idnumber, controllable by a site-wide setting

Add a site-wide configuration option "Show idnumber in quiz reports", defaulting to No. (Or maybe just use the existing setting grade_report_showuseridnumber in the Gradebook grader report settings.)

Change the overview report and the detailed responses report so that if this setting is turned on, you get an additional column containing each user's idnumber.

Add a site-wide setting to hide the user profile image from quiz reports

Add a site-wide configuration option "Hide user images in quiz reports", defaulting to No.

Change the overview report and the detailed responses report so that if this setting is turned on, you don't get the column with users' images.

Improvements to the Regrade report

At the moment, if you follow a link to the regrade report, it immediately does a regrade. This is dangerous. We want more control. This is MDL-5519. Completing this work should also deal with MDL-3032.

The workflow we would like to see for regrading is:

1. When you go to the regrade report, it should just show some introductory instructions and some controls for selecting particular users and/or questions. By default, it should be set up so that all users and questions are selected. There are then two buttons: 'Dry run' and 'Regrade'.

2. If the 'Dry run' button is pressed, it does a pretend regrade of the selected questions for the selected users. This does not change anything in the database; instead, it just tells you what would change if you did a regrade for real. This gives you further controls so that you can easily select a subset of the users and/or questions included in the dry run. In addition, the main controls from step 1 are also shown again so you can change things and do another 'Dry run'.

3. Clicking the 'Regrade' button actually performs the regrade, displaying what has been changed, as in the dry run. This display should be more understandable than the one the regrade report currently provides.
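The workflow above suggests that the dry run and the real regrade should share one code path that differs only in whether changes are written back. A minimal Python sketch of that idea follows; the function signature, the attempt dict shape, and `grade_fn` are all hypothetical.

```python
def regrade(attempts, grade_fn, selected_users=None, selected_questions=None,
            dry_run=True):
    """Regrade the selected responses and report what changed.

    attempts: list of dicts with keys 'user', 'question', 'response', 'grade'.
    grade_fn(question, response): returns the grade under the current
    question definition.
    A selection of None means 'all'. With dry_run=True nothing is modified;
    the returned list describes what a real regrade would change.
    """
    changes = []
    for attempt in attempts:
        if selected_users is not None and attempt["user"] not in selected_users:
            continue
        if (selected_questions is not None
                and attempt["question"] not in selected_questions):
            continue
        new_grade = grade_fn(attempt["question"], attempt["response"])
        if new_grade != attempt["grade"]:
            changes.append({"user": attempt["user"],
                            "question": attempt["question"],
                            "old": attempt["grade"],
                            "new": new_grade})
            if not dry_run:
                attempt["grade"] = new_grade
    return changes
```

Returning the same change list in both modes means the 'Regrade' display can reuse the dry-run display code unchanged.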

General improvements and bug fixing

The quiz reports have not been substantially revised since at least Moodle 1.6. This means the conversion to roles and capabilities was somewhat superficial, and the interface has not been converted to formslib. Elsewhere in Moodle there have been improvements that these reports could benefit from, for example the moodle_url class, and some of the features implemented for the new gradebook reports in 1.9.

Bringing the quiz reports up to the standard expected in Moodle 1.9/2.0 would greatly simplify long-term maintenance of these reports, and so reduce costs long term. This will also mean that people who learn how to write Gradebook reports will then easily be able to write Quiz reports, and vice versa.

On the other hand, not all of these requirements are critical to the OU, so please provide a separate estimate for each one. Then we will decide which ones we actually want to include in the outsourcing contract.

Enhance how quiz reports work to match recent developments in the gradebook

In Moodle 1.9, gradebook reports are fully-featured plugins with a db folder, so they can create tables if they need to store their own data, or define capabilities. Also there is a nice mechanism that allows gradebook reports to add configuration settings to the admin tree, and they use get/set_user_preferences to store users' options for viewing the reports (perhaps some of these preferences, like records per page, should be shared between the reports). We would like quiz reports enhanced so quiz report developers have the same facilities available.

Using the ability to define new capabilities, we would like a separate 'view' capability (e.g. quizreport/overview:view) for each report, to replace the existing mod/quiz:viewreports capability, so we can choose which roles can see which reports.

Switch to using formslib and improve the interface

The current user interface for controlling the reports is not very well thought out, so don't just reproduce the existing interface using formslib. Devise a new one, based on what teachers actually want to achieve using these reports, that is as consistent as possible between reports.

Switch to using moodle_url

The quiz reports are prime candidates for using moodle_url to clean up the code and eliminate uses of $SESSION.

Refactoring opportunities

There is some common functionality between reports, for example the interface and SQL for determining which users' attempts are included in the reports. This could usefully be moved into a mod/quiz/report/lib.php file, or something similar.

Most of the reports have parallel code paths with very similar code to do the on-screen display and the export to different formats. We should really improve tablelib so that this sort of duplicate code is not necessary.

Developer documentation

Having worked on these reports, you will probably know more about quiz reports than anyone else, so please write the docs page How to write a quiz report plugin.

Performance testing

The Open University has 400,000 users and 3,000 courses in its database. Our largest courses have around 5,000 students, often in groups of about 20 (so up to 250 groups on a course). Naturally some operations may be slow, but all operations should scale to a dataset of this size. (Naturally our hardware is quite powerful to help cope with this.)

[CONTRIB-183 Detailed responses report does not cope with lots of data](https://tracker.moodle.org/browse/CONTRIB-183) is one instance of this.

Perhaps one change we should make is to use get_recordsets, instead of get_records, where possible in the reports, to reduce memory requirements, particularly when exporting large datasets.
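The memory argument is easiest to see in an export loop. The Python sketch below is an analogy only, not Moodle code: `export_csv` is a hypothetical name, and the generator passed to it plays the part of a recordset, handing over one row at a time so that the whole result set is never held in memory.

```python
import csv
import io


def export_csv(rows, out, header):
    """Write rows to 'out' one at a time and return how many were written.

    'rows' may be any iterable, including a lazy database cursor, so memory
    use stays flat however large the export is.
    """
    writer = csv.writer(out)
    writer.writerow(header)
    count = 0
    for row in rows:
        writer.writerow(row)
        count += 1
    return count


# Usage: a generator expression stands in for a database recordset.
buffer = io.StringIO()
written = export_csv(((i, i * 2) for i in range(3)), buffer, ["attempt", "score"])
```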

[MDL-7772](https://tracker.moodle.org/browse/MDL-7772) Not all combinations of "Show ..." and Groups settings work properly in the overview report

The bug has plenty of details of the various cases, and what they should mean.

This is one of the places that may need more thought since Roles and capabilities were introduced in Moodle 1.7. (At the moment, the quiz considers someone a 'Student' if they have the mod/quiz:attempt capability.)

Similar controls appear on some of the other reports. These need to be tested and fixed too.

[MDL-12418](https://tracker.moodle.org/browse/MDL-12418) Problems with quiz item analysis and TeX-filter

This is just a bug and needs to be fixed. MDL-12258 is probably the same issue and needs to be fixed too. There is some useful discussion in MDL-12369.

[MDL-12547](https://tracker.moodle.org/browse/MDL-12547) Manual grading report does not take any notice of groups

It would be useful if group mode could be added to this report. The bug has a proposed fix from Karlene Clapp, which needs to be reviewed before being checked in.

[MDL-12392](https://tracker.moodle.org/browse/MDL-12392) Manual grading report does not recognise global role assignments of students

Similar to MDL-7772.

[MDL-12824](https://tracker.moodle.org/browse/MDL-12824) Essay type of quiz questions needs to color mark which students have been graded and which have NOT

Hopefully easy.

[MDL-13427](https://tracker.moodle.org/browse/MDL-13427) Export Item Analysis Table to Files (excel or text format) Including Tags

Just a bug.

[MDL-13428](https://tracker.moodle.org/browse/MDL-13428) When a quiz attempt is reviewed in "Item Analysis Table"; the general comments on Essay appears in the "answer" block

This seems weird; could you have a quick look, please?

[MDL-13429](https://tracker.moodle.org/browse/MDL-13429) The "Go" button on "Item Analysis Table" page in moodle 1.8.x keeps moving once clicked

I remember fixing this in another report. There was some really weird HTML and CSS. I think that while working on reports and making the layout more consistent, you will just fix this in passing.

[MDL-13678](https://tracker.moodle.org/browse/MDL-13678) Change default number of rows per page on quiz reports

We want a default that is most useful to teachers. 30 is a typical class size. I think the current value of 10 is so that it fits on one screen on a typical monitor, but I don't think that is a good reason for keeping it at 10.

Some background to assessment at the OU

To help understand the rationale behind some of the above requirements I am adding this section which gives a bit of context about how the Moodle quiz is used at the OU. Recall that the OU is entirely a distance learning university.

Courses

Generally, our courses are produced over a period of a year or two by academic staff in our Faculties. Then, only when they are finished, do we present them to students. Typically there is one presentation per year of each course (but sometimes more or less). Each presentation of a course gets a new Moodle course, created using backup and restore without student data of the previous presentation's Moodle course. During presentation, the academic staff are responsible for setting exams and assessments, and updating the course if necessary. Typically a course will last for 5-10 years before being rewritten.

Popular first level courses can have several thousand students enrolled in each presentation. Many higher-level undergraduate courses have a few hundred per presentation. Obscure post-graduate courses have tens of students. There are about 600 course-presentations per year.

During their studies of a course, students are directly supported by a tutor, who answers any questions they have, moderates online activities, and marks their assessed work. Due to UK data protection law, Tutors are only allowed to see the work of their own students; however, they may see aggregate statistics for the whole cohort of students.

In Moodle, we achieve this using groups. A tutor and their students go into a group, and then certain activities in the course are set to Separate groups mode. Sometimes, one Tutor will be responsible for two separate Tutor groups. In this case, in Moodle, they see the groups dropdown, so they only look at one group at a time. That is how we want it to work.

Students are sometimes divided into groups in different ways for different activities, using the groupings feature. In this case, the quiz will be set to use the 'Tutor Groups' grouping.

Significant roles

Course staff

The Academic and Administrative staff who produce the course (and the administrative staff who assist them) get a role in Moodle called Course Staff. This is very close to the (editing) Teacher role.

Course staff will be able to view any of the quiz reports.

Tutors

Guide students through their studies. This role is close to the Moodle Non-editing Teacher role, but crucially without the moodle/site:accessallgroups capability, because Tutors may only see data for Students in their own tutor group (apart from aggregate data like whole-course averages).

We expect Tutors will only have the capability to see the Overview and Detailed responses reports; and the Manual grading report, if that particular quiz requires it.

Students

As normal.

Will not be able to access any quiz reports.

Use of quizzes

As mentioned above, quizzes are normally in separate groups mode, so Tutors can only see the results of Students in their group(s).

Virtually none of our quizzes use a time-limit. We don't use any of the 'security' options. We don't use 'Shuffle questions', but we sometimes use 'Shuffle within questions'. Sometimes we use adaptive mode. Sometimes we don't.

There are two common ways we use quizzes:

Summative quizzes (for credit)

These will be set up with an Open and Close date. Students will be allowed one attempt only. Students will probably not be allowed to see scores until after the close date.

One common set-up is for each question to be chosen randomly from a category with several variants. So you might have a category "CMA41: Q1 variants" containing several questions Q1-V1, Q1-V2, Q1-V3 that are very similar, but different (for example, different numbers in a calculation). The first question in the quiz is then a random question picking from that category. Then you have another category with variants of question 2, etc.

Formative quizzes (self-assessment)

No open or close dates. Unlimited attempts. Students given results immediately. May or may not use random questions.

Previous systems

For many years, the OU had a paper-based Computer Marked Assessment system - the type where students answer multiple-choice questions by filling in a form with pencil that was then scanned into a computer. That system had an "Early warning test" to analyse results, and part of the purpose of this work is to ensure that Moodle can do all the things that system did.

OU VLE general requirements for outsourcing

(Copied from our internal wiki 3rd December 2007.)

- Code must be developed against the Moodle 1.9 release version.

- The contractor should do a design describing, where applicable, any

  - database changes

  - capabilities that will be created/used to control access to different features

  - interface mock-ups

  - APIs

  - file formats

  This must be agreed with the Open University before proceeding to implementation. (It does not have to be a long document. If the feature being developed is very simple then the design might also be very brief.)

- The Moodle coding guidelines must be followed. In particular, the development must use:

  - PHP QuickForm (introduced in Moodle 1.8) rather than the old HTML forms.

  - Capabilities (from the role/capability system introduced in Moodle 1.7) to control access to features, rather than isteacher() etc.

  - XMLDB install scripts for database tables.

  - Language files rather than hard-coded strings (except for error messages that are not expected to appear to users under normal circumstances; these can be hard-coded if desired).

- Additional libraries, beyond those already included in the moodle code base, can only be used if this is agreed with the Open University in advance. The new libraries must be placed in the main lib directory rather than within the developed solution. Where suitable functionality already exists within the moodle code base, those libraries should be used in preference to new ones, to reduce long-term maintenance and support costs.

- Any customization of the moodle user interface should be contained within the developed solution unless agreed in advance with the Open University.

- Must develop and test against a Moodle install using the following databases:

  - PostgreSQL.

  - Microsoft SQL Server. (A free limited version, SQL Server Express Edition, is available for testing.)

- The code must be delivered as a patch in standard (universal) format that applies to Moodle 1.9. (This does not preclude the changes being committed directly to the Moodle CVS repository too.)

  - The patch should be minimal. That is, it should not change things like whitespace in parts of a file that were not otherwise touched.

  - If multiple outsourced tasks affect the same area of code then they can be combined into one patch, or the order of application should be noted.

  - Patches should be checked against a current CVS version Moodle 1.9.x+ (MOODLE_19_STABLE) installation (to make sure they at least apply successfully) before handover.

- In addition to the code, we also require a functionality document that describes how the code works. This will be used by our testers, and as a basis for user documentation. Normally, this will be very close to the original specification, but where things changed during implementation, the functionality document needs to be updated. This is in addition to the normal Moodle help-files that should be included in the code.