Using Workshop


Workshop phases

A workshop can be viewed as a sequence of five phases. A Workshop activity can run for several days or weeks. As the teacher, you decide when to move to the next phase.

The typical flow of a Workshop activity is: set up and configure, submission of work, assessment by the participants, grading evaluation by the teachers, closing.

Note: Advanced users can also switch between the submission and assessment phases several times (an iterative process).

Progress in the Workshop activity is visualised in the Workshop planner tool. This tool shows all phases and highlights the current one. It lists all tasks that are due in the current phase and shows which of them are already done and which are still pending.

Setup phase

In this phase you, as the teacher, create the activity and configure its settings. You also switch to this phase whenever you want to change the settings.

Course participants cannot do anything in this phase: they can neither submit work nor assess it.

Write the workshop introduction

You do this in the Workshop settings, section General, text area Introduction.

Write the instructions for submission

You do this in the Workshop settings, section Submission settings, text area Instructions for submission.

Edit the assessment form

- Click the link.

- The form differs depending on the grading strategy; see the Workshop settings, section Grading settings.

Prepare example submissions

- This applies if the assessment setup provides for example submissions; see the Workshop settings, section Workshop features.

- Click the Add example submission button.

- Upload the file(s).

At the end of the setup phase, all checkboxes must be ticked.

Submission phase

In this phase the course participants submit their work. In the Workshop settings you can restrict the period (start, end, or both) during which work can be submitted.

Assessment phase

If you have enabled peer assessment in the Workshop settings, the course participants use this phase to assess the submissions of their peers that were allocated to them. For this phase too, you as the teacher can define a fixed period (start, end, or both) in the Workshop settings.

Grading evaluation phase

In this phase you, as the teacher, determine the overall grade for each course participant. This overall grade consists of the grade for the submitted work (component "Submission") and the grade for the participant's assessments of other people's work (component "Assessment"). You determine the overall grade for everyone and give feedback on the submissions and the assessments. You can also publish selected submissions so that all course participants can see them as examples.

Closed

When you switch to this phase, all grades are transferred to the course gradebook.

In this phase course participants can see their own submissions, their assessments and the published submissions of others.
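The phase descriptions above can be summarised as a small lookup table. The following Python sketch is only an illustration: the action names are made-up labels rather than Moodle capability names, and the empty entry for the grading evaluation phase is an assumption, since the text does not say what participants can do there.

```python
from enum import Enum


class Phase(Enum):
    SETUP = 1
    SUBMISSION = 2
    ASSESSMENT = 3
    GRADING_EVALUATION = 4
    CLOSED = 5


# Participant actions per phase, following the descriptions above.
# Action names are illustrative labels, not Moodle identifiers.
PARTICIPANT_ACTIONS = {
    Phase.SETUP: set(),                 # participants cannot do anything
    Phase.SUBMISSION: {"submit"},
    Phase.ASSESSMENT: {"assess"},
    Phase.GRADING_EVALUATION: set(),    # assumption: not stated in the text
    Phase.CLOSED: {"view_own_submission", "view_own_assessments", "view_published"},
}


def can(phase: Phase, action: str) -> bool:
    """Return True if a participant may perform `action` in `phase`."""
    return action in PARTICIPANT_ACTIONS[phase]
```

For instance, `can(Phase.SETUP, "submit")` is false, while `can(Phase.SUBMISSION, "submit")` is true.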

Workshop grading

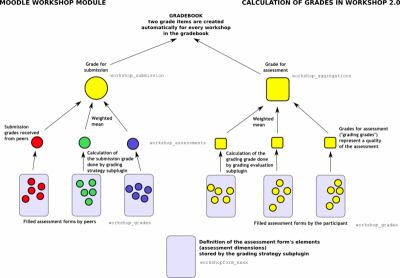

The grades for a Workshop activity are obtained gradually over several stages and are then finalized. The following scheme illustrates the process and also shows in which database tables the grade values are stored.

As you can see, every participant gets two numerical grades in the course Gradebook. During the grading evaluation phase, the course facilitator can let the Workshop module calculate these final grades. Note that they are stored only in the Workshop module until the activity is switched to the final (Closed) phase. It is therefore safe to experiment with the grades until you are happy with them, and then close the Workshop to push the grades into the Gradebook. You can even switch the phase back, recalculate or override the grades, and close the Workshop again so that the grades in the Gradebook are updated (note that you can override the grades in the Gradebook, too).

During the grading evaluation, the Workshop grades report gives you a comprehensive overview of all individual grades. The report uses the following symbols and syntax:

| Value | Meaning |
|---|---|
| - (-) < Alice | There is an assessment allocated to Alice, but it has been neither assessed nor evaluated yet. |
| 68 (-) < Alice | Alice assessed the submission, giving it a grade for submission of 68. The grade for assessment (grading grade) has not been evaluated yet. |
| 23 (-) > Bob | Bob's submission was assessed by a peer and received a grade for submission of 23. The grade for this assessment has not been evaluated yet. |
| 76 (12) < Cindy | Cindy assessed the submission, giving it a grade of 76. The grade for this assessment has been evaluated as 12. |
| 67 (8) @ 4 < David | David assessed the submission, giving it a grade for submission of 67 and receiving a grade of 8 for this assessment. His assessment has weight 4. |
| 80 (20 / 17) > Eve | Eve's submission was assessed by a peer. Eve's submission received 80 and the grade for this assessment was calculated as 20. The teacher has overridden the grading grade to 17, probably with an explanation for the reviewer. |

Grade for submission

The final grade for every submission is calculated as the weighted mean of the individual assessment grades given by all reviewers of this submission. The value is rounded to the number of decimal places set in the Workshop settings form.

The course facilitator can influence the grade for a given submission in two ways:

- by providing their own assessment, possibly with a higher weight than usual peer reviewers have

- by overriding the grade to a fixed value
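As a rough illustration of the weighted mean and the two ways of influencing it, here is a minimal Python sketch. The function name, its parameters and the sample numbers are hypothetical, and Python's built-in `round` is used as a stand-in for whatever rounding Workshop applies.

```python
def grade_for_submission(assessments, decimals=0, override=None):
    """Weighted mean of assessment grades.

    assessments: list of (grade, weight) pairs, one per reviewer.
    override:    fixed grade set by the facilitator, if any.
    """
    if override is not None:
        # An override replaces the calculated value entirely.
        return override
    total_weight = sum(weight for _, weight in assessments)
    if total_weight == 0:
        return None  # nothing to aggregate yet
    mean = sum(grade * weight for grade, weight in assessments) / total_weight
    return round(mean, decimals)


# Two peers (weight 1) and a facilitator's own assessment with weight 2.
peer_and_teacher = [(68, 1), (76, 1), (90, 2)]
print(grade_for_submission(peer_and_teacher))
print(grade_for_submission(peer_and_teacher, override=50))
```

Giving the facilitator's assessment a higher weight pulls the mean towards their grade, while an override ignores the peer assessments altogether.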

Grade for assessment

The grade for assessment tries to estimate the quality of the assessments that the participant gave to their peers. This grade (also known as the grading grade) is calculated by the "artificial intelligence" hidden within the Workshop module as it tries to do a teacher's typical job.

During the grading evaluation phase, you use a Workshop subplugin to calculate grades for assessment. At the moment, only one subplugin is available, called Comparison with the best assessment. The following text describes the method used by this subplugin. Note that more grading evaluation subplugins can be developed as Workshop extensions.

Grades for assessment are displayed in parentheses () in the Workshop grades report. The final grade for assessment is calculated as the average of the individual grading grades.

No single formula describes the calculation, but the process is deterministic. Workshop picks one of the assessments as the best one, namely the one closest to the mean of all assessments, and gives it a 100% grade. Then it measures the 'distance' of every other assessment from this best one and gives each a lower grade the more it differs from the best (given that the best one represents the consensus of the majority of assessors). The parameter of the calculation is how strict the comparison should be, that is, how quickly the grades fall as assessments differ from the best one.

If there are just two assessments per submission, Workshop cannot decide which of them is 'correct'. Imagine you have two reviewers, Alice and Bob, who both assess Cindy's submission. Alice says it is rubbish and Bob says it is excellent. There is no way to decide who is right, so Workshop treats both as right and gives both a 100% grade for this assessment. To prevent this, you have two options:

- Either you provide an additional assessment so that the number of assessors (reviewers) is odd and Workshop is able to pick the best one. Typically, the teacher provides their own assessment of the submission to settle it.

- Or you decide that you trust one of the reviewers more. For example, you know that Alice is much better at assessing than Bob is. In that case, you can increase the weight of Alice's assessment, say to "2" (instead of the default "1"). For the purposes of the calculation, Alice's assessment is treated as if there were two reviewers with exactly the same opinion, and it is therefore likely to be picked as the best one.
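The effect of the weight can be seen in a small Python sketch. It is a deliberate simplification that treats each assessment as a single number (Workshop compares individual form responses), and the function name and the grades 0 and 100 are hypothetical.

```python
import math


def closest_to_weighted_mean(assessments):
    """Return the names of the assessors closest to the weighted mean.

    assessments: dict mapping assessor name -> (grade, weight).
    A single grade per assessment is a simplification for illustration.
    """
    total_weight = sum(weight for _, weight in assessments.values())
    mean = sum(grade * weight for grade, weight in assessments.values()) / total_weight
    distance = {name: abs(grade - mean) for name, (grade, _) in assessments.items()}
    best = min(distance.values())
    return sorted(name for name, d in distance.items() if math.isclose(d, best))


# Equal weights: both are equally far from the mean, so neither can be preferred.
print(closest_to_weighted_mean({"Alice": (0, 1), "Bob": (100, 1)}))

# Alice's assessment counts twice: the mean moves towards her and she is picked.
print(closest_to_weighted_mean({"Alice": (0, 2), "Bob": (100, 1)}))
```

With equal weights the function returns both names, which mirrors the tie described above; doubling Alice's weight shifts the mean to about 33 and leaves her as the only closest assessor.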

Backward compatibility note: In Workshop 1.x this case of exactly two assessors with the same weight is not handled properly and leads to wrong results, as only one of them is lucky enough to get 100% and the other gets a lower grade.

It is very important to know that the grading evaluation subplugin Comparison with the best assessment does not compare the final grades. Regardless of the grading strategy used, every filled-in assessment form can be seen as an n-dimensional vector of normalized values. The subplugin therefore compares the responses to all assessment form dimensions (criteria, assertions, ...) and then calculates the distance between two assessments using the variance statistics.

To demonstrate this with an example, let us say you use the grading strategy Number of errors to peer-assess research essays. This strategy uses a simple list of assertions, and the reviewer (assessor) just checks whether each assertion is passed or failed. Let us say you define the assessment form using three criteria:

- Does the author state the goal of the research clearly? (yes/no)

- Is the research methodology described? (yes/no)

- Are references properly cited? (yes/no)

Let us say the author gets a 100% grade if all criteria are passed (that is, answered "yes" by the assessor), 75% if only two criteria are passed, 25% if only one criterion is passed and 0% if the reviewer answers "no" to all three statements.

Now imagine the work by Daniel is assessed by three colleagues - Alice, Bob and Cindy. They all give individual responses to the criteria in order:

- Alice: yes / yes / no

- Bob: yes / yes / no

- Cindy: no / yes / yes

As you can see, they all gave a 75% grade to the submission. But Alice and Bob also agree in their individual responses, while the responses in Cindy's assessment are different. The evaluation method Comparison with the best assessment tries to imagine what a hypothetical, absolutely fair assessment would look like. In the Development:Workshop 2.0 specification, David refers to it as "how would Zeus assess this submission?" and we estimate it would be something like this (we have no other way):

- Zeus: 66% yes / 100% yes / 33% yes

Then we try to find the assessments that are closest to this theoretically objective assessment. We find that Alice's and Bob's are the best ones and give them a 100% grade for assessment. Then we calculate how far Cindy's assessment is from the best one. As you can see, Cindy's responses match the best one in only one of the three criteria, so Cindy's grade for assessment will not be very high.
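The "Zeus" estimate and the ranking of the three assessors can be reproduced with a short Python sketch. It encodes "yes" as 1 and "no" as 0 and uses a plain squared distance as a stand-in for the variance-based distance the subplugin uses, so the function names and the numbers it produces are illustrative only.

```python
# Responses to the three criteria, with yes = 1 and no = 0.
responses = {
    "Alice": [1, 1, 0],
    "Bob":   [1, 1, 0],
    "Cindy": [0, 1, 1],
}


def consensus(responses):
    """Per-criterion mean of all responses (the hypothetical 'Zeus' assessment)."""
    count = len(responses)
    return [sum(column) / count for column in zip(*responses.values())]


def distances_from_consensus(responses):
    """Squared distance of each assessment from the consensus.

    This is a simplified measure chosen for illustration; it is not the
    formula implemented in the Workshop module.
    """
    zeus = consensus(responses)
    return {
        name: sum((given - ideal) ** 2 for given, ideal in zip(answer, zeus))
        for name, answer in responses.items()
    }


print(consensus(responses))
print(distances_from_consensus(responses))
```

The consensus comes out as roughly two thirds, one, and one third "yes", matching the Zeus line above. Alice and Bob end up at the same, smaller distance, while Cindy is further away even though all three final grades are identical.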

The same logic applies, suitably adapted, to all other grading strategies. The conclusion is that the grade given by the best assessor does not need to be the one closest to the average, because assessments are compared at the level of individual responses, not final grades.

See also

- Workshop module forum - the Workshop forum in the Using Moodle course on moodle.org

- A forum post in which David explains a particular set of Workshop results - in the Using Moodle course on moodle.org

- Moodle Workshop 2.0 - a (simplified) explanation - presentation by Mark Drechsler

- A Brief Journey into the Moodle 2.0 Workshop

Example submissions

If this feature is enabled, students can assess example submissions for practice before assessing their peers' work. They can compare their assessments with reference assessments made by the teacher. The grade is not counted in the grade for assessment.

To support this function, teachers need to upload one or more example submissions and the corresponding reference assessments:

- Click the ‘Add example submission’ button on the first page of the workshop setup for the assignment.

- Click the ‘continue’ button and provide a corresponding reference assessment based on the assessment form. If you do not provide a reference assessment at this point, you can assess the example submissions later by clicking the ‘assess’ button on the first page of the workshop setup. Unassessed example submissions are highlighted in pink by Moodle.

- Teachers can also edit the reference assessment later by clicking the ‘re-assess’ button on the first page.

Once the workshop has been created, further settings relating to submissions can be made. To do this, click the menu highlighted below, which appears when you click the workshop’s link or after clicking “Save and Display” on completing the workshop setup. To access the menu, simply click “Allocate Submissions”. It is highlighted in the picture by the red box.

Manual Allocation

In the manual allocation menu, once a student has submitted work, the teacher can choose which other students get to assess it. If the student has not submitted any work, the teacher cannot assign other students to assess it. However, even without a submission, the teacher can choose which students’ work this student will assess.

Note that a student who has not submitted any work can only be assigned to review students who have submitted work; likewise, other students cannot be assigned to review a student who has not submitted any work.
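The rule in the note above boils down to a single check, sketched here in Python. The function and parameter names are hypothetical and only restate the rule; they do not reflect how Moodle implements it.

```python
def can_allocate(reviewer, author, submitted):
    """Return True if `reviewer` may be assigned to assess `author`'s work.

    submitted: set of participants who have submitted work.
    Only the author needs a submission; the reviewer does not.
    """
    return author in submitted


submitted = {"Alice"}
print(can_allocate("Bob", "Alice", submitted))   # Bob has no submission but may review Alice
print(can_allocate("Alice", "Bob", submitted))   # nobody can review Bob: nothing to assess
```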

Random Allocation

In random allocation the teacher is given five settings that determine how the random allocation works.

- Number of reviews: The teacher picks between 0 and 30 reviews, either per submission or per reviewer. That is, the teacher sets either the number of reviews each submission must receive or the number of reviews each student has to carry out.

- Prevent Reviews: If the teacher wants students in the same group never to review each other’s work (most likely because, in a group submission, it is their own work too), they can check this box and Moodle will ensure that students are only allocated work from outside their own group to assess.

- Remove current allocations: Checking this box removes any manual allocations that have been set in the Manual Allocation menu.

- Can assess with no submission: Checking this box allows students to assess other students’ work without having submitted their own.

- Add self assessments: When checked, this option makes sure that students must assess their own work as well as other students’ work. This is a good way to teach students to be objective about their own work.
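To make the interplay of these settings more concrete, here is a minimal Python sketch of a random allocator for the "reviews per submission" mode. It is not Moodle's allocation algorithm: the function, its parameter names and the load-balancing heuristic are assumptions made for illustration.

```python
import random


def allocate_randomly(authors, groups, reviews_per_submission,
                      prevent_same_group=True,
                      assess_without_submission=True,
                      add_self_assessment=False,
                      rng=None):
    """Return a dict mapping each author to a list of reviewers.

    authors: participants who have submitted work.
    groups:  dict mapping every participant to a group name.
    """
    rng = rng or random.Random()
    # Without the "no submission" option, only authors may act as reviewers.
    pool = list(groups) if assess_without_submission else list(authors)
    load = {participant: 0 for participant in pool}
    allocation = {}
    for author in authors:
        candidates = [
            p for p in pool
            if p != author
            and not (prevent_same_group and groups[p] == groups[author])
        ]
        rng.shuffle(candidates)
        # Stable sort after shuffling: random order among equally loaded reviewers.
        candidates.sort(key=lambda p: load[p])
        chosen = candidates[:reviews_per_submission]
        for reviewer in chosen:
            load[reviewer] += 1
        allocation[author] = chosen + ([author] if add_self_assessment else [])
    return allocation


groups = {"Alice": "A", "Bob": "A", "Cindy": "B", "David": "B", "Eve": "C"}
authors = ["Alice", "Bob", "Cindy", "David"]  # Eve has not submitted anything
print(allocate_randomly(authors, groups, 2, rng=random.Random(0)))
```

In this sketch Eve can still be picked as a reviewer unless `assess_without_submission` is switched off, and no author is ever reviewed by someone from their own group.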

After the workshop has been created, teachers can configure further settings related to assessments: Edit assessment form, Grading evaluation settings and Workshop toolbox.

Editing assessment form

To set the criteria for an assignment, teachers need to fill out an assessment form during the setup phase. Students can view this assessment form in the submission phase and focus on what is important about the task while working on their assignment. In the next phase, the assessment phase, students assess their peers’ work based on this assessment form.

Depending on the grading strategy chosen in the grading settings, teachers get the corresponding blank assessment form to edit by clicking the ‘Edit assessment form’ button on the first page of the workshop setup for the assignment. The grading strategy can be one of Accumulative grading, Comments, Number of errors or Rubrics. Teachers can define each criterion in detail in the assessment form.

Grade evaluation settings

In the grade evaluation phase, teachers can configure how the grade for assessments is calculated.

- Grade calculation method:

This setting determines how the grade for assessments is calculated. Currently there is only one option: Comparison with the best assessment. For more detail, refer to the Grade for assessment section above.

First, the Comparison with the best assessment method tries to imagine what a hypothetical, absolutely fair assessment would look like.

For example, a teacher uses Number of errors as the grading strategy to peer-assess an assignment. This strategy uses a list of assertions, and assessors just need to check whether each assertion is passed or failed. That is, they only need to choose ‘yes’ or ‘no’ for each criterion in the assessment form. In this case there are three assessors, Alice, Bob and Cindy, and the assessment form contains three criteria. The author gets a 100% grade if all the criteria are passed, 75% if two criteria are passed, 25% if only one criterion is passed and 0% if the assessor gives ‘no’ for all three assertions. Here are the assessments they give to one particular piece of work:

Alice: yes/yes/no

Bob: yes/yes/no

Cindy: no/yes/yes

Then the best assessment will be:

yes/yes/no

Second, Workshop gives the best assessment a 100% grade. Next it measures the ‘distance’ of the other assessments from this best assessment. The greater the distance, the lower the grade the assessment receives. The Comparison of assessments setting, next to the Grade evaluation setting, determines how quickly the grade falls as the assessment differs from the best one.

Note: The Comparison with the best assessment method compares the responses to each individual criterion instead of comparing the final grades. In the example above, all three assessors give 75% to the submission. However, only Alice and Bob get a 100% grade for their assessments, while Cindy gets a lower grade, because Alice and Bob also agree in their individual responses, while the responses in Cindy’s assessment are different.

- Comparison of assessments:

This setting has five options: very lax, lax, fair, strict and very strict. It specifies how strict the comparison of assessments should be. With the Comparison with the best assessment method, all assessments are compared with the best assessment picked by Workshop. The more similar an assessment is to the best assessment, the higher the grade it gets, and vice versa. This setting determines how quickly the grades fall as assessments differ from the best assessment.
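The role of this setting can be pictured with a small Python sketch. The penalty factors below are invented for illustration and are not the values used by Workshop, and the distance is assumed to be normalised to the range 0 to 1; only the ordering of the five options is taken from the text.

```python
# Hypothetical penalty factors: a larger factor makes grades fall faster.
STRICTNESS = {
    "very lax": 0.5,
    "lax": 0.75,
    "fair": 1.0,
    "strict": 1.5,
    "very strict": 2.0,
}


def grade_for_assessment(distance, comparison="fair"):
    """Map a distance from the best assessment to a grade in percent.

    distance: 0.0 (identical to the best assessment) to 1.0 (as different
    as possible); this normalisation is an assumption of the sketch.
    """
    penalty = 100.0 * distance * STRICTNESS[comparison]
    return max(0.0, 100.0 - penalty)


for level in STRICTNESS:
    print(level, grade_for_assessment(0.25, level))
```

Whatever the setting, the best assessment itself (distance 0) keeps its 100% grade; the setting only changes how steeply the other assessments are marked down.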

Workshop toolbox

- Clear all aggregated grades:

Clicking this button resets the aggregated grades for submission and the grades for assessment. Teachers can recalculate these grades from scratch in the grade evaluation phase.

- Clear assessments:

Clicking this button resets the grades for assessments along with the grades for submission. The assessment form remains the same, but all reviewers need to open the assessment form again and re-save it so that the given grades are calculated again.